Data Quality

When it comes to data quality, how good is good enough?

The hallmark of data quality is how well data supports the context in which it’s consumed. Your legal department, for example, may use “Informatica Company” while your finance department uses “INFA,” and both records are of equal quality.

Quality is a relative and never-ending judgment, one that needs to be defined by the business (or business unit) that’s consuming the data. An essential element of holistic data governance, trustworthy data serves critical business needs across the enterprise—from legal to finance to marketing and beyond.
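To make "relative" quality concrete, here is a minimal Python sketch in which each consumer registers its own definition of good data and the same record is judged against each one. The consumer names, the `company_name` field, and both rules are hypothetical illustrations of the legal/finance example above, not part of any Informatica product.

```python
import re
from typing import Callable, Dict

# A record is a simple mapping of field names to values (hypothetical schema).
Record = Dict[str, str]

# A ticker symbol here means 1 to 5 uppercase letters (an assumption for this sketch).
TICKER = re.compile(r"[A-Z]{1,5}")

def legal_rule(record: Record) -> bool:
    # Hypothetical rule: legal wants a spelled-out company name, not a ticker.
    name = record.get("company_name", "").strip()
    return bool(name) and TICKER.fullmatch(name) is None

def finance_rule(record: Record) -> bool:
    # Hypothetical rule: finance keys its records on the ticker symbol.
    name = record.get("company_name", "").strip()
    return TICKER.fullmatch(name) is not None

# Each business unit owns its own definition of "good data".
RULES: Dict[str, Callable[[Record], bool]] = {
    "legal": legal_rule,
    "finance": finance_rule,
}

def fit_for(consumer: str, record: Record) -> bool:
    """Return True if the record meets the named consumer's quality rule."""
    return RULES[consumer](record)

legal_record = {"company_name": "Informatica Company"}
finance_record = {"company_name": "INFA"}

print(fit_for("legal", legal_record))      # True
print(fit_for("finance", finance_record))  # True
print(fit_for("finance", legal_record))    # False
```

Both records pass in their own context and fail in the other, which is the sense in which neither one is "better" than the other.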

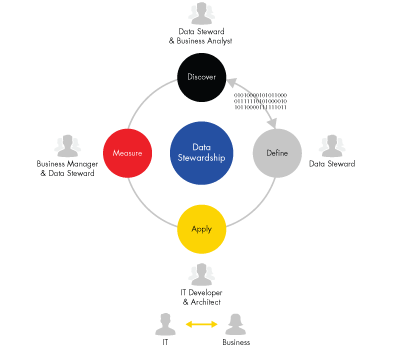

Driving data quality requires a repeatable process that includes:

- Defining the specific requirements for “good data,” wherever it’s used.

- Establishing rules for certifying the quality of that data.

- Integrating those rules into an existing workflow, both to test data against them and to handle exceptions.

- Continuing to monitor and measure data quality throughout its lifecycle (usually done by data stewards).
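The four steps above can be sketched as a small rule engine. This is an illustrative Python example under stated assumptions, not a description of any vendor's tooling: the `Rule`, `QualityReport`, and `apply_rules` names and the two sample rules are hypothetical. Failing records are routed to an exception list for a data steward instead of stopping the workflow, and the pass rate gives a simple metric to track over time.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple

Record = Dict[str, str]

@dataclass
class Rule:
    """A hypothetical certification rule: a readable name plus a predicate."""
    name: str
    check: Callable[[Record], bool]

@dataclass
class QualityReport:
    passed: List[Record] = field(default_factory=list)
    # Each exception keeps the failing record and the names of the rules it broke,
    # so a data steward can review it later instead of the pipeline halting.
    exceptions: List[Tuple[Record, List[str]]] = field(default_factory=list)

    @property
    def pass_rate(self) -> float:
        total = len(self.passed) + len(self.exceptions)
        # An empty batch is treated as fully passing (a design assumption).
        return len(self.passed) / total if total else 1.0

def apply_rules(records: List[Record], rules: List[Rule]) -> QualityReport:
    """Test every record against every rule and route failures to exceptions."""
    report = QualityReport()
    for record in records:
        failed = [rule.name for rule in rules if not rule.check(record)]
        if failed:
            report.exceptions.append((record, failed))
        else:
            report.passed.append(record)
    return report

# Steps 1-2: requirements for "good data" expressed as rules (hypothetical examples).
rules = [
    Rule("customer_id present", lambda r: bool(r.get("customer_id"))),
    Rule("email has @", lambda r: "@" in r.get("email", "")),
]

# Step 3: run the rules as one step of a workflow.
batch = [
    {"customer_id": "C1", "email": "a@example.com"},
    {"customer_id": "", "email": "b@example.com"},
    {"customer_id": "C3", "email": "not-an-email"},
]
report = apply_rules(batch, rules)

# Step 4: record the pass rate for each batch to monitor quality over time.
print(f"pass rate: {report.pass_rate:.2f}")  # pass rate: 0.33
for record, failed in report.exceptions:
    print(record, "->", failed)
```

Collecting exceptions rather than raising on the first failure keeps the workflow running while still giving stewards a complete list of what needs attention.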

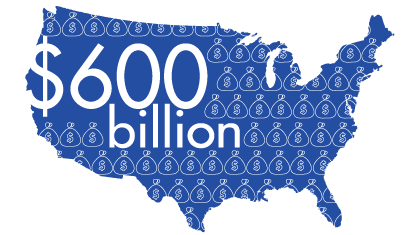

Bad data costs U.S. businesses $600 billion a year, according to the research firm TDWI.

Why data quality matters

Why does data quality matter? An often-cited statistic puts the cost of “bad” data to U.S. businesses at $600 billion annually.[1] Whether bad data causes you to lose revenue, damages your brand, erodes your competitive edge, or simply leads to poor decisions, the costs are significant.

When looking for a data quality solution, we recommend you put the following at the top of your “must-haves” list:

- Reliability: Reliable data quality is a critical and persistent need. Your business will want a vendor with a proven track record and a comprehensive approach that supports every key step of a holistic data quality process: discovering, defining, applying, monitoring, and measuring progress.

- Portability: Whether you’re using legacy mainframes or the latest technology, you’ll want a data quality tool that can evolve as your business does. That means the solution you deploy should scale across platforms, whether on-premises, in the cloud, in Hadoop, or in a hybrid ecosystem.